Deliverables

3D Mesh

Orthomosaic

Panorama

Capture

Resolution (GSD)

Accuracy

Image Overlap

This tutorial covers the fundamentals for capturing high-quality image sets as required for the SiteSee services. It provides the basic knowledge needed to understand the SiteSee capture procedures and is recommended reading for pilots with little or no experience in photogrammetry. It consists of two components:

- Deliverables: This section describes the outputs provided by the SiteSee services (3D mesh, orthomosaic, panorama).

- Capture: This section explains the factors that determine output quality, such as resolution (ground sampling distance), accuracy and image overlap.

Deliverables

SiteSee uses software such as ContextCapture to produce the georeferenced deliverables described in this section. This software applies the principles of photogrammetry: it computes the spatial coordinates of objects (e.g. a cell tower and its equipment) by aerial triangulation of overlapping drone images. To help you better understand how photogrammetry works, we recommend the following video tutorials:

- Triangulation

- Aerial Photogrammetry Explained

- ContextCapture for Beginners: AeroTriangulation (ignore the parts about using the ContextCapture software)

These videos will help you understand the concepts covered in this tutorial.
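The core geometric idea behind triangulation can be sketched in a few lines. The example below is a minimal two-dimensional sketch of forward intersection. It assumes two cameras whose positions are known exactly, each observing the same target along a known bearing. The function name and coordinates are hypothetical. Real aerial triangulation, as performed by software such as ContextCapture, works in three dimensions with many overlapping images and also estimates the camera positions and orientations themselves.

```python
import math


def intersect_bearings(a, bearing_a_deg, b, bearing_b_deg):
    """Hypothetical helper: intersect two 2D sight lines.

    a, b: known camera positions (x, y) in metres.
    bearing_*_deg: direction from each camera to the target,
    measured counter-clockwise from the x-axis, in degrees.
    """
    dax, day = math.cos(math.radians(bearing_a_deg)), math.sin(math.radians(bearing_a_deg))
    dbx, dby = math.cos(math.radians(bearing_b_deg)), math.sin(math.radians(bearing_b_deg))
    # Solve a + t*da = b + s*db for t, i.e. t*da - s*db = b - a (Cramer's rule).
    det = dax * -dby + dbx * day
    if abs(det) < 1e-12:
        raise ValueError("sight lines are parallel; the target cannot be triangulated")
    rx, ry = b[0] - a[0], b[1] - a[1]
    t = (rx * -dby + dbx * ry) / det
    return (a[0] + t * dax, a[1] + t * day)


# Two cameras 10 m apart, both sighting the same antenna.
x, y = intersect_bearings((0.0, 0.0), 45.0, (10.0, 0.0), 135.0)
print(f"target at ({x:.2f}, {y:.2f})")  # target at (5.00, 5.00)
```

The intersection only works if the two sight lines are not parallel, which is one reason why the same object has to be seen from clearly different camera positions.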

3D Mesh

A 3D mesh is a 3D model of, for example, a cell tower. Since a 3D mesh is reconstructed from a point cloud, it helps to understand what a point cloud is.

Similar to pixels in a digital photograph, a point cloud is made of a large number of points. However, unlike pixels in a two-dimensional area, the points are scattered like a cloud in three-dimensional space (hence the term 'point cloud'). The image below shows the point cloud of two antenna panels.

Depending on the object and resolution, a point cloud can contain a very large number of points (millions or even billions), each defined by its geographic coordinates (i.e. latitude and longitude), altitude and colour. A disadvantage of point clouds is that, when zooming in, the gaps between the points become increasingly visible, which makes the point cloud harder to interpret (this effect can already be seen in the image above). Because it is an accurate representation of an object's surface, a point cloud can be used to measure distances, areas and volumes. It is also georeferenced, that is, it can be used to obtain the coordinates and altitude of the objects and features shown in it.
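To make the idea of a georeferenced point cloud more concrete, here is a minimal sketch of how points might be stored and used for a distance measurement. The text above describes points in latitude and longitude. This sketch instead assumes the points have already been projected into a metric coordinate system (easting/northing in metres), so straight-line distances can be computed directly. The `CloudPoint` class and all coordinates are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class CloudPoint:
    # Hypothetical layout: projected coordinates in metres plus an RGB colour.
    easting_m: float
    northing_m: float
    altitude_m: float
    rgb: tuple


def distance_m(p: CloudPoint, q: CloudPoint) -> float:
    """Straight-line 3D distance between two points of the cloud."""
    return math.dist(
        (p.easting_m, p.northing_m, p.altitude_m),
        (q.easting_m, q.northing_m, q.altitude_m),
    )


# Hypothetical points on the top and bottom edge of an antenna panel.
panel_top = CloudPoint(500010.0, 4649776.0, 31.5, (210, 210, 205))
panel_bottom = CloudPoint(500010.0, 4649776.0, 30.0, (198, 199, 195))
print(distance_m(panel_top, panel_bottom))  # 1.5
```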

A 3D mesh is computed based on an underlying point cloud by approximating the point cloud surface with a mesh of small triangles (hence the term '3D mesh'). Because all triangles are connected, a 3D mesh is visually more attractive and easier to view than a point cloud. And like the point cloud, it is georeferenced and provides an accurate representation of an object's surface. The image below shows the 3D mesh of the antenna panels shown in the point cloud above. As can be seen, it offers a more attractive representation similar to a conventional photograph.
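Because a mesh is just a set of connected triangles, measurements on it reduce to simple geometry. As an illustration, the minimal sketch below computes the surface area of a mesh by summing the areas of its triangles. Each triangle's area is half the magnitude of the cross product of two of its edges. The vertex list plus index-triple layout is a common way to store meshes but is assumed here, and the panel dimensions are hypothetical.

```python
import math


def triangle_area(a, b, c):
    """Area of one triangle: half the length of the cross product of two edges."""
    ux, uy, uz = b[0] - a[0], b[1] - a[1], b[2] - a[2]
    vx, vy, vz = c[0] - a[0], c[1] - a[1], c[2] - a[2]
    cross = (uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx)
    return 0.5 * math.sqrt(sum(k * k for k in cross))


def mesh_area(vertices, triangles):
    """Total surface area of a triangle mesh given vertices and index triples."""
    return sum(triangle_area(*(vertices[i] for i in tri)) for tri in triangles)


# Hypothetical front face of an antenna panel, 1 m wide and 2 m tall,
# modelled as two triangles sharing the diagonal between vertices 0 and 2.
vertices = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 0.0, 2.0), (0.0, 0.0, 2.0)]
triangles = [(0, 1, 2), (0, 2, 3)]
print(mesh_area(vertices, triangles))  # 2.0
```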

Orthomosaic

An orthomosaic (also referred to as an orthophoto) provides a bird's-eye view of a large area (similar to the satellite view in Google Maps). It is constructed by stitching many aerial photographs together and is geometrically corrected (i.e. orthorectified) so that it has a uniform scale, like a map. Like the 3D mesh, an orthomosaic is georeferenced. However, because it is a two-dimensional representation, it can only be used to determine the horizontal coordinates of objects (i.e. not their altitude) and to measure distances and areas (i.e. not volumes). Note that orthomosaics are an optional deliverable: whether an orthomosaic capture is required depends on the job specifications.
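Because an orthomosaic has a uniform scale, converting a pixel position to ground coordinates is a simple linear mapping based on the GSD (explained under Resolution below). The minimal sketch below makes several assumptions: the orthomosaic is north-up without rotation, its top-left corner position is hypothetical, and its GSD is 2 cm/px. It uses the shoelace formula to measure the area of a polygon outlined in pixel coordinates. GIS tools typically store this kind of mapping as an affine geotransform. The constants and function names here are purely illustrative.

```python
import math

# Hypothetical orthomosaic georeferencing (north-up, no rotation).
ORIGIN_EASTING_M = 500000.0    # easting of the top-left image corner
ORIGIN_NORTHING_M = 4650000.0  # northing of the top-left image corner
GSD_M = 0.02                   # 2 cm/px


def pixel_to_ground(col, row):
    """Map a pixel position to ground coordinates; rows increase southwards."""
    return (ORIGIN_EASTING_M + col * GSD_M, ORIGIN_NORTHING_M - row * GSD_M)


def polygon_area_m2(points):
    """Shoelace formula; coordinates are taken relative to the first vertex
    to limit floating-point error with large projected coordinates."""
    x0, y0 = points[0]
    rel = [(x - x0, y - y0) for x, y in points]
    twice_area = sum(
        x1 * y2 - x2 * y1
        for (x1, y1), (x2, y2) in zip(rel, rel[1:] + rel[:1])
    )
    return abs(twice_area) / 2


# A hypothetical equipment shelter outlined as a 500 x 250 px rectangle.
corners_px = [(0, 0), (500, 0), (500, 250), (0, 250)]
corners_m = [pixel_to_ground(c, r) for c, r in corners_px]
print(round(math.dist(corners_m[0], corners_m[1]), 2))  # 10.0
print(round(polygon_area_m2(corners_m), 2))              # 50.0
```

Volumes cannot be measured this way because the mapping carries no altitude information.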

Panorama

The SiteSee services include 360-degree spherical panoramas. Unlike the panoramas obtained from conventional cameras, spherical panoramas allow panning not only horizontally but also vertically, all the way down to a bird's-eye view. Panoramas are not georeferenced and cannot be used for measurements. Note that panoramas are an optional deliverable: whether a panorama capture is required depends on the job specifications.

Capture

The SiteSee services use state-of-the-art processing based on sophisticated AI algorithms that require very high-quality image sets captured to our specifications. Our pilots are required to adhere strictly to the procedures outlined in our capture guides. These guides assume, however, that readers have a basic understanding of image acquisition concepts such as resolution, ground sampling distance, accuracy and image overlap. This section provides an introduction to these concepts.

Resolution (GSD)

The resolution of georeferenced deliverables (i.e. a 3D mesh or an orthomosaic) is determined by the ground sampling distance (GSD) of a capture. The GSD is defined as the linear distance on the ground covered by one image pixel and is usually expressed in centimetres per pixel. For example, a GSD of 5 cm/px means that one pixel in the image covers a linear distance on the ground of 5 centimetres and hence an area of 5 × 5 = 25 square centimetres. Therefore, the larger the GSD, the lower the resolution.
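The arithmetic in the example above can be written out as a small sketch. The second function, which estimates how many pixels a feature of a given size spans, is an illustration added here. The 150 cm feature size is hypothetical.

```python
def pixel_footprint_area_cm2(gsd_cm_per_px: float) -> float:
    """Area covered by one pixel: the GSD applies in both image directions."""
    return gsd_cm_per_px ** 2


def pixels_across(feature_size_cm: float, gsd_cm_per_px: float) -> float:
    """Approximate number of pixels spanning a feature of the given size."""
    return feature_size_cm / gsd_cm_per_px


print(pixel_footprint_area_cm2(5.0))  # 25.0
print(pixels_across(150, 5.0))        # 30.0 pixels at 5 cm/px
print(pixels_across(150, 2.0))        # 75.0 pixels at 2 cm/px: smaller GSD, more detail
```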

The term 'ground sampling distance' is somewhat misleading, because it suggests that resolution is always determined relative to the ground. This is, however, not the case. For example, when capturing a cell tower, the camera is pointing at the tower, not the ground. In this case, resolution is determined relative to the surface of the object of interest, so 'surface sampling distance' would be a more accurate term. To follow convention, we will keep using the term 'ground sampling distance', but remember that 'ground' might refer to non-horizontal surfaces such as the side of a tower.

The GSD is determined by the camera's sensor size and resolution (which together determine the size of each pixel on the sensor), the focal length of the lens, and either

- the capture altitude above ground level (AGL) for orthomosaics, or

- the capture distance from the object of interest for 3D meshes.

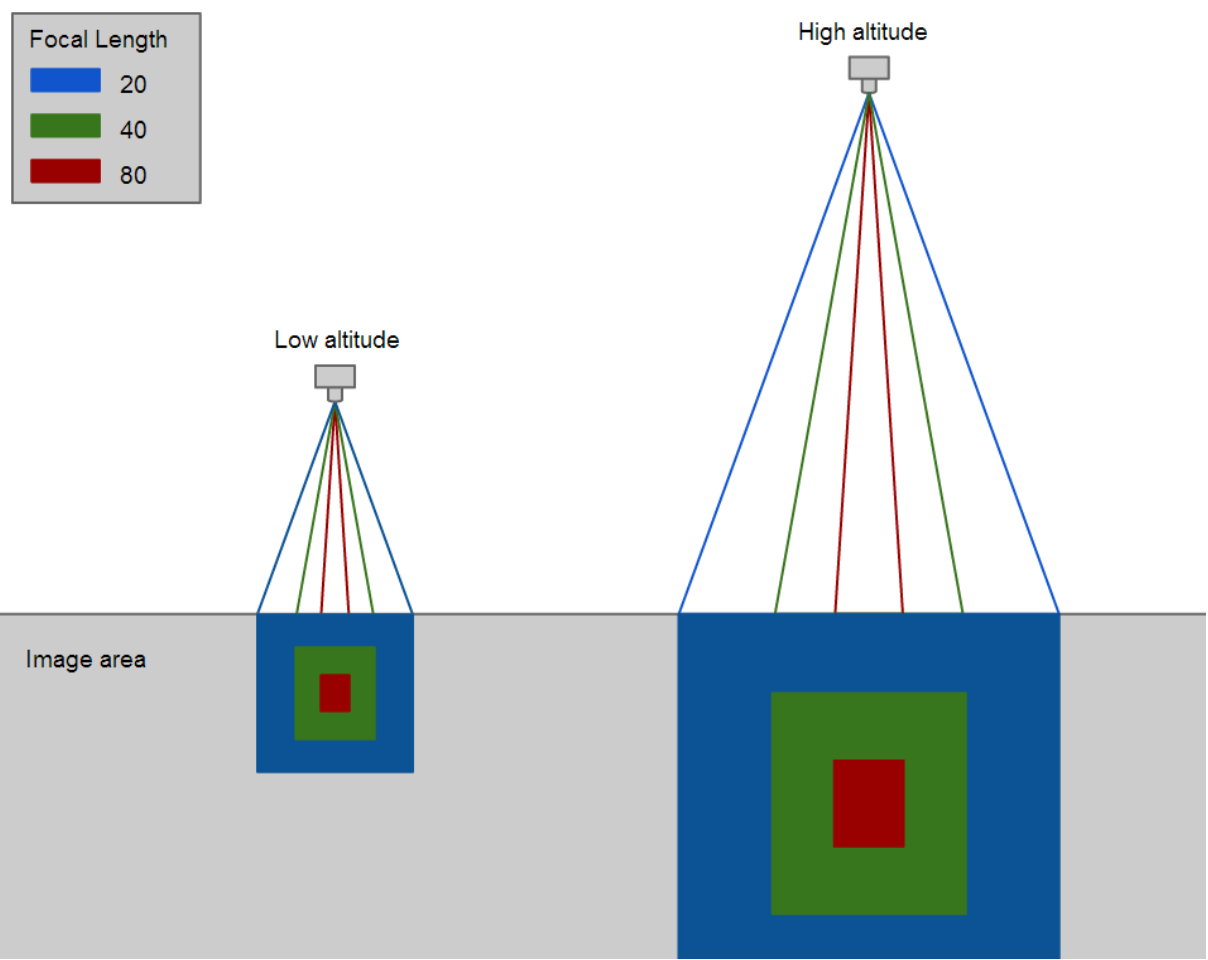

The figure below illustrates these relationships. As can be seen, a higher altitude and a shorter focal length (i.e. the use of a wide-angle lens) result in a larger GSD and thus lower resolution.
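These relationships are commonly expressed as GSD = (sensor width × distance) / (focal length × image width in pixels). The distance is the altitude AGL for orthomosaics and the distance to the object for 3D meshes. The minimal sketch below implements this formula and its inverse, which gives the distance needed for a target GSD. It assumes the camera points perpendicular to the captured surface. The camera values are assumptions chosen to resemble a typical 1-inch-sensor drone camera (13.2 mm sensor width, 8.8 mm focal length, 5472 px image width); use the specifications of your own camera.

```python
def gsd_cm_per_px(sensor_width_mm, focal_length_mm, image_width_px, distance_m):
    """GSD for a camera pointing perpendicular to the captured surface."""
    # Width of the surface covered by one image, in metres (similar triangles).
    footprint_width_m = sensor_width_mm * distance_m / focal_length_mm
    return footprint_width_m / image_width_px * 100  # metres -> centimetres


def distance_for_gsd_m(target_gsd_cm, sensor_width_mm, focal_length_mm, image_width_px):
    """Inverse: distance (or altitude AGL) that yields the target GSD."""
    return target_gsd_cm / 100 * image_width_px * focal_length_mm / sensor_width_mm


# Assumed camera: 13.2 mm sensor width, 8.8 mm focal length, 5472 px image width.
camera = dict(sensor_width_mm=13.2, focal_length_mm=8.8, image_width_px=5472)

print(round(gsd_cm_per_px(**camera, distance_m=100), 2))  # 2.74 cm/px at 100 m AGL
print(round(gsd_cm_per_px(**camera, distance_m=10), 3))   # 0.274 cm/px at 10 m from a tower
print(round(distance_for_gsd_m(1.0, **camera), 2))        # 36.48 m for 1 cm/px
```

Because distance appears in the numerator and focal length in the denominator, doubling the distance or halving the focal length doubles the GSD, which is exactly the behaviour described above.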

Accuracy

There are two types of accuracy that need to be distinguished:

- Absolute accuracy (also referred to as external accuracy): Absolute accuracy describes the degree to which the position of an object in an orthomosaic or 3D mesh (e.g. a cell tower) corresponds to the object's actual position in the real world.

- Relative accuracy (also referred to as internal accuracy): Relative accuracy describes how accurately the features of an object in an orthomosaic or 3D mesh (e.g. the antenna panels of a cell tower) are positioned relative to each other.

Here are a few examples to help you understand the difference:

- The location of the 3D mesh of a cell tower is about 3 m northeast of the tower's actual real-world location: low absolute accuracy.

- The elevation of the 3D mesh of an antenna panel is about 2 m higher than the panel's actual elevation: low absolute accuracy.

- The base of a cell tower in an orthomosaic is located exactly where it is in the real world: high absolute accuracy.

- The height of the 3D mesh of a cell tower is the same as the tower's real height: high relative accuracy.

- The distance of an antenna panel from the tower base as measured in the 3D mesh is about 10 percent less than measured onsite: low relative accuracy.
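The difference between the two types of accuracy can be made concrete with a few lines of code. In the minimal sketch below, absolute error is measured as the distance between a modelled point and its surveyed real-world position. Relative error is measured as the percentage difference between a distance measured in the model and the same distance measured onsite. All coordinates are hypothetical and given in a local metric frame (x east, y north, z up).

```python
import math


def absolute_error_m(model_pt, true_pt):
    """How far a modelled point lies from its real-world position."""
    return math.dist(model_pt, true_pt)


def relative_error_pct(model_a, model_b, true_a, true_b):
    """Percentage error of a distance measured in the model vs. onsite."""
    measured = math.dist(model_a, model_b)
    actual = math.dist(true_a, true_b)
    return (measured - actual) / actual * 100


# Surveyed positions: tower base and an antenna 30 m above it.
true_base, true_antenna = (0.0, 0.0, 0.0), (0.0, 0.0, 30.0)

# Case 1: the whole model is shifted about 3 m north-east.
offset = 3 / math.sqrt(2)
model_base = (offset, offset, 0.0)
model_antenna = (offset, offset, 30.0)
print(f"{absolute_error_m(model_base, true_base):.1f} m")  # 3.0 m: low absolute accuracy
print(f"{relative_error_pct(model_base, model_antenna, true_base, true_antenna):.1f} %")  # 0.0 %: relative accuracy unaffected

# Case 2: the base is in the right place, but the model is 10 percent too small.
print(f"{relative_error_pct(true_base, (0.0, 0.0, 27.0), true_base, true_antenna):.1f} %")  # -10.0 %: low relative accuracy
```

Case 1 shows that a uniform shift of the whole model hurts absolute accuracy without affecting measurements within the model.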

Resolution and accuracy are not the same: resolution is only one of the factors that determine accuracy. Another important factor is how precisely the camera positions are known at the moment each image is captured, which depends on the precision of the positioning system used during capture. Unfortunately, the GPS receiver of a consumer-grade drone is only about as precise as that of a smartphone and thus results in low accuracy. Far better results can be achieved with a professional-grade surveying system such as ground control points (GCPs).

SiteSee uses GCPs instead of drone GPS data to improve both the absolute and the relative accuracy. For example, with GCPs, the relative accuracy of 3D meshes processed by SiteSee is up to 20 times better than with drone GPS data alone. Detailed information on the accuracy of the 3D meshes produced by SiteSee is provided in this document.

In the field, GCPs such as the one shown in the image below are placed around the object of interest (e.g. a cell tower) and captured together with it. While the capture is in progress, these points automatically log their precise position (typically to millimetre accuracy).

Image Overlap

After watching the video tutorial ContextCapture for Beginners: AeroTriangulation recommended in the section Deliverables above, you will know that, to determine the position of an object in space using photogrammetry, the object must be captured in at least three different images. This is the absolute minimum. If the camera positions and orientations are not known with very high accuracy (which is usually the case when a consumer-grade drone is used for the capture), or if the object to be captured is complex, much higher overlaps are required. Sufficient image overlap is the key to a successful capture: if overlaps are too low, the quality of the resulting 3D mesh or orthomosaic will also be low, or processing will fail altogether.

In the image sequence below, the fork in the dirt road appears four times. This equals an overlap of about 75 percent, which is typically used when capturing images for an orthomosaic. Complex objects such as cell towers require much higher image overlaps of over 90 percent.

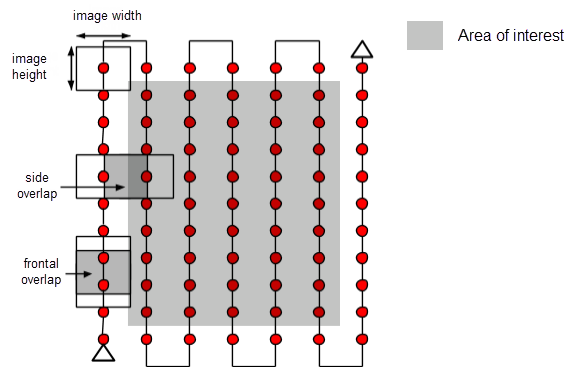

There are different types of overlap, which are named according to how the image frame is oriented relative to the flight path. They are illustrated in the image below, which shows a grid flight path used to capture an orthomosaic (the side overlap is often also referred to as lateral overlap).

For three-dimensional vertical objects such as cell towers, the terms vertical overlap and horizontal overlap are used instead (see image below).

The amount of overlap is usually specified as a percentage. For example, an overlap of 80 percent means that four fifths of each image pair overlap, that is, the same feature or object would appear in five consecutive images. An overlap of 75 percent means that three quarters overlap, that is, the same feature or object would appear in four consecutive images.
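Each new image is shifted by (1 − overlap) of a frame. A feature therefore stays in view for about 1 / (1 − overlap) consecutive images, and the same factor determines how far apart the images are taken. The minimal sketch below applies this to a grid flight. It assumes the camera points straight down with the long side of the image across the flight path. The GSD, image size and overlap values are hypothetical examples, not SiteSee specifications.

```python
def images_per_feature(overlap):
    """Average number of consecutive images in which a feature appears."""
    if not 0 <= overlap < 1:
        raise ValueError("overlap must be a fraction in [0, 1)")
    return 1 / (1 - overlap)


def spacing_m(footprint_m, overlap):
    """Distance between successive images (or flight lines) for a given overlap."""
    return footprint_m * (1 - overlap)


for overlap in (0.75, 0.80, 0.90):
    print(f"{overlap:.0%} overlap -> {round(images_per_feature(overlap))} images")
# 75% overlap -> 4 images
# 80% overlap -> 5 images
# 90% overlap -> 10 images

# Grid flight: footprint = GSD x image size (assumed 2 cm/px, 5472 x 3648 px).
gsd_m = 0.02
across_track_m = 5472 * gsd_m  # long image side, across the flight path
along_track_m = 3648 * gsd_m   # short image side, along the flight path
print(f"photo spacing: {spacing_m(along_track_m, 0.75):.2f} m")  # 18.24 m (front overlap 75%)
print(f"line spacing: {spacing_m(across_track_m, 0.75):.2f} m")  # 27.36 m (side overlap 75%)
```

Raising the overlap from 75 to 90 percent reduces the spacing to 0.10 / 0.25 = 40 percent of its previous value. This is why higher overlaps produce larger image sets, and it is also why these overlaps must not be reduced to save images.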

We would like to conclude this tutorial by again stressing the importance of sufficient overlaps. In our experience, novice pilots tend to underestimate the importance of correct image overlaps, and some attempt to reduce image set size and save batteries by reducing overlaps. This is not good practice and, as mentioned above, leads to inferior processing results. The overlaps specified by SiteSee have been tested thoroughly and need to be adhered to strictly. Failure to do so is one of the main causes of processing failure.